Deep Reinforcement Learning Interception Simulation

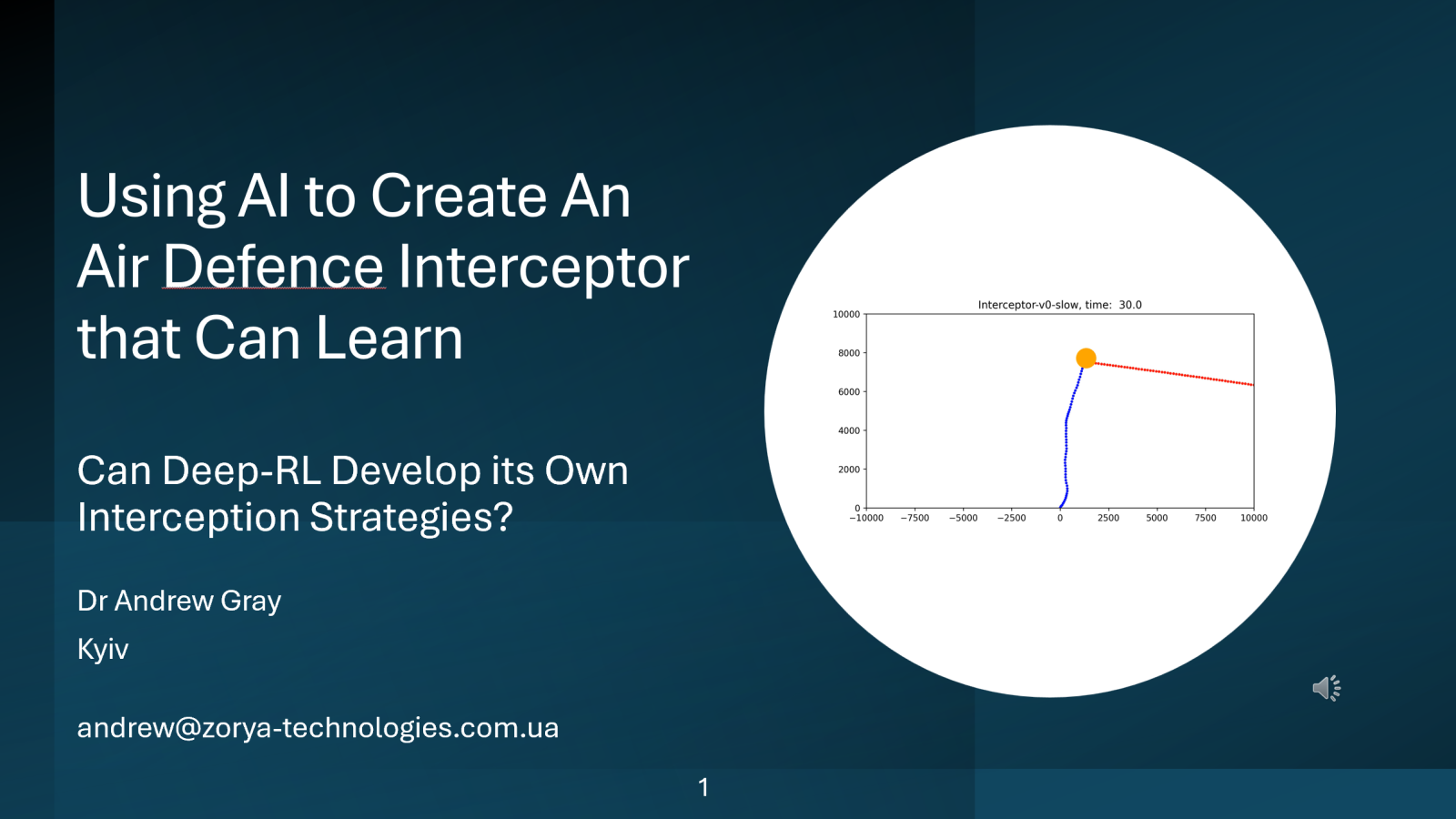

A deep reinforcement learning AI agent learns to intercept a moving ballistic target in a simulated 2D environment.

WatchOverview

This talk is about how a deep reinforcement learning (RL) AI agent can learn interception policies within a simulated 2D environment.

The task is formulated as a continuous control problem in which an autonomous agent learns to reach a moving target that follows a ballistic trajectory.

We will see that the AI agent develops successful interception policies on its own, and these vary according to the relative performance characteristics of the interceptor and the target.

To watch the computer simulations properly, this video should be viewed on a large screen, such as a laptop or tablet.

Different Language Versions

The narration for this presentation is being done by an AI that I have trained to use my voice, and to translate my speech into other languages. This AI produces a very good likeness of my voice in English, but I am told that it is less good in some other languages.

Different languages can be selected using the Dropdown Language Selector at the top of the page.

Contents

- The Interception Problem & Strategies

- An Interceptor that can Learn from Experience

- Deep Reinforcement Learning

- Training an Interceptor in a 2-D Simulation

- Interception Strategies After Training

- Summary and Conclusions

The Interception Problem

The goal of this talk is to show how deep RL can learn interception behaviour in a simulated environment.

In the examples that I will show, the target follows ballistic motion, with its trajectory parameters being chosen randomly in each simulation. This means that the AI agent must develop a general interception policy that is effective for a wide range of possible target trajectories.

In the training simulations, the chance of a successful interception is computed probabilistically, based on the distance between the target and the interceptor at the moment of detonation. Zero separation gives one hundred percent probability of success, and this probability drops to zero as the separation increases.

Can the AI Agent Learn Effective Interception Strategies?

We will see that using Deep-RL, the AI agent successfully learns an effective interception policy during training. The type of strategy developed by the AI depends strongly on the maximum speed and acceleration of the missile relative to the target.

This type of framework can be extended to more general multi-agent systems involving both pursuit and evasion, where the AI Agent learns how to pursue and intercept the target, whilst the target is also trying to evade the interceptor.